Robots.txt example

11/3/2023

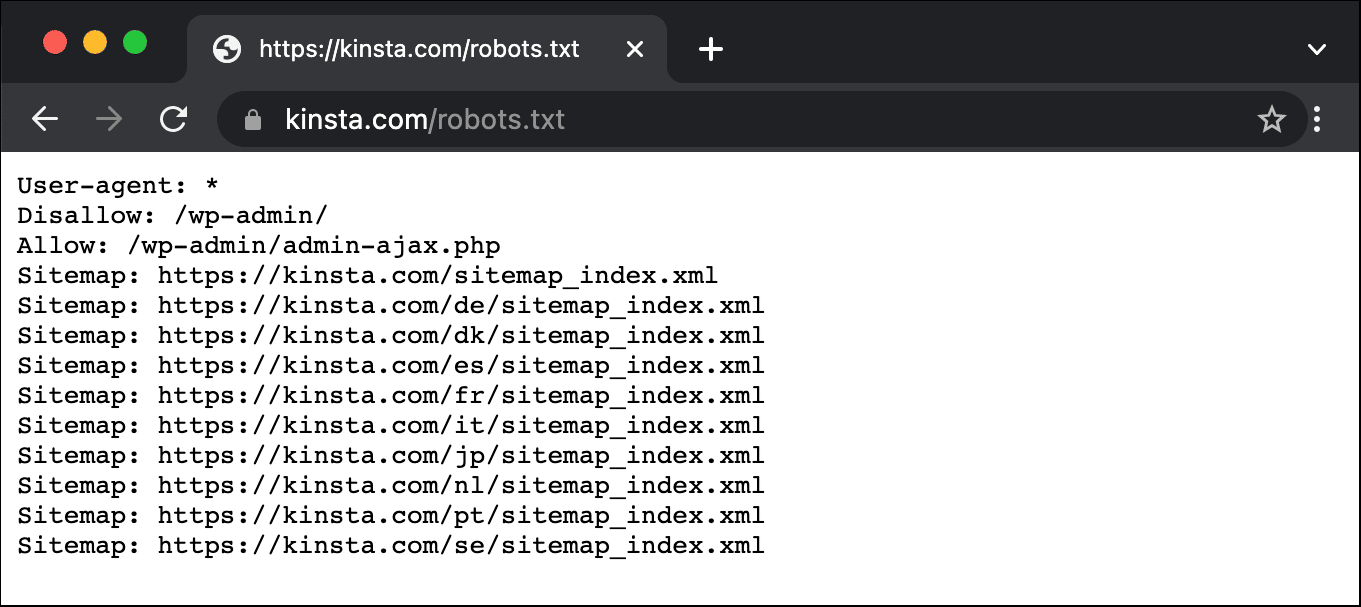

Robots.txt files are a way to kindly ask webbots, spiders, crawlers, wanderers and the like to access or not access certain parts of a website. For a long time the de facto ‘standard’ did not make it beyond an informal “Network Working Group INTERNET DRAFT”; it was only published as RFC 9309 (Robots Exclusion Protocol) in 2022. Nonetheless, the use of robots.txt files has long been widespread (e.g. …, …), and bots from Google, Yahoo and the like adhere to the rules defined in robots.txt files, although their interpretation of those rules might differ (e.g. the rules for googlebot).

As the name already suggests, robots.txt files are plain text files, and they are always found at the root of a domain. In essence, their syntax follows a `fieldname: value` scheme, with optional preceding `user-agent: …` lines to indicate the scope of the following rule block. Blocks are separated by blank lines, and omitting the user-agent field (which directly corresponds to the HTTP user-agent field) is taken to mean that the block applies to all bots. `#` serves to comment out whole lines or parts of lines: everything from `#` to the end of the line is regarded as a comment. Possible field names are: user-agent, disallow, allow, crawl-delay, sitemap, and host.

Let us look at an example file to get an idea of what a robots.txt file might look like. It starts with a comment line followed by a line disallowing access to any content, i.e. everything contained in root (“/”), for all bots. The next block concerns GoodBot and NiceBot: those two have the previous restriction lifted by being disallowed nothing (an empty `Disallow:` value). PrettyBot likes shiny stuff and therefore gets a special permission for everything contained in the “/shinystuff/” folder, while all other restrictions still hold. In the last block, all bots are asked to pause at least 5 seconds between two visits.

For comparison, a real-world robots.txt opens with a comment block like this:

```
#
# robots.txt for … and friends
#
# Please note: There are a lot of pages on this site, and there are
# some misbehaved spiders out there that go _way_ too fast. If you're
# irresponsible, your access to the site may be blocked.
#

# advertising-related bots:
User-agent: Mediapartners-Google*
```
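
Since the file always sits at the root of a domain, its address can be derived from any page URL on that site. Below is a minimal sketch in Python using only the standard library's `urllib.parse`. The function name `robots_txt_url` and the `example.com` URLs are hypothetical, and the sketch assumes a full URL that includes scheme and host.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt location for the site hosting page_url.

    Hypothetical helper: keeps the scheme and the host part (netloc, which
    includes any port), replaces the path with /robots.txt and drops the
    query string and fragment.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


if __name__ == "__main__":
    print(robots_txt_url("https://example.com/shinystuff/gold.png?size=large#top"))
```

For the URL above it prints `https://example.com/robots.txt`. A port such as `:8080` is kept, because it belongs to the `netloc` part of the URL.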

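The syntax rules described above (blank lines separate blocks, `#` starts a comment, each line reads `fieldname: value`, a block without user-agent applies to all bots) can be sketched as a small parser. This is a hypothetical helper written for illustration (`parse_blocks`, `KNOWN_FIELDS` and `EXAMPLE` are made-up names), not the parser any real bot uses. The `EXAMPLE` text is an assumption: a guess at the example file, reconstructed from its description.

```python
from typing import Dict, List, Tuple

# Field names listed in the text; any other field is skipped in this sketch.
KNOWN_FIELDS = {"user-agent", "disallow", "allow", "crawl-delay", "sitemap", "host"}


def parse_blocks(text: str) -> List[Dict[str, list]]:
    """Split robots.txt text into rule blocks (hypothetical helper).

    - blank lines separate blocks
    - everything after '#' up to the end of the line is a comment
    - each remaining line is read as 'fieldname: value'
    - a block without a user-agent field applies to all bots ('*')
    """
    raw_blocks: List[List[Tuple[str, str]]] = []
    current: List[Tuple[str, str]] = []
    for raw in text.splitlines() + [""]:  # the extra "" flushes the last block
        if not raw.strip():  # blank line: close the current block
            if current:
                raw_blocks.append(current)
                current = []
            continue
        line = raw.split("#", 1)[0].strip()  # drop the comment part
        field, sep, value = line.partition(":")  # split at the first colon only
        field = field.strip().lower()
        if sep and field in KNOWN_FIELDS:
            current.append((field, value.strip()))

    blocks = []
    for pairs in raw_blocks:
        agents = [v for f, v in pairs if f == "user-agent"] or ["*"]
        rules = [(f, v) for f, v in pairs if f != "user-agent"]
        blocks.append({"agents": agents, "rules": rules})
    return blocks


# Hypothetical reconstruction of the example file described in the text.
EXAMPLE = """\
# a made-up example robots.txt
Disallow: /

User-agent: GoodBot # another comment
User-agent: NiceBot
Disallow:

User-agent: PrettyBot
Allow: /shinystuff/

Crawl-delay: 5
"""

if __name__ == "__main__":
    for block in parse_blocks(EXAMPLE):
        print(block["agents"], block["rules"])
```

Running it prints one line per block: `['*'] [('disallow', '/')]`, `['GoodBot', 'NiceBot'] [('disallow', '')]`, `['PrettyBot'] [('allow', '/shinystuff/')]` and `['*'] [('crawl-delay', '5')]`. Deciding which of these blocks a particular bot must obey is a separate step, and that is where crawlers' interpretations diverge.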

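How one concrete library reads such rules can be checked with Python's standard-library `urllib.robotparser.RobotFileParser`. Its interpretation differs from the description above in two ways: it ignores rule lines that are not preceded by a `User-agent` line, and a bot that matches a named block follows only that block, not the `*` block. The sketch below therefore spells out `User-agent: *` and repeats the shared `Disallow: /` and `Crawl-delay: 5` in every block to express the example's intent. The file contents, the bot names, the helper name `build_parser` and the `example.com` URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt expressing the example's intent for Python's parser:
# every block names its user agents explicitly, and the restrictions meant for
# all bots are repeated in each named block. Python applies the first matching
# rule line, so PrettyBot's Allow line must come before its Disallow line.
ROBOTS_TXT = """\
# a made-up example robots.txt
User-agent: *
Disallow: /
Crawl-delay: 5

User-agent: GoodBot
User-agent: NiceBot
Disallow:
Crawl-delay: 5

User-agent: PrettyBot
Allow: /shinystuff/
Disallow: /
Crawl-delay: 5
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text directly, without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rp = build_parser(ROBOTS_TXT)
    checks = [
        ("SomeBot", "https://example.com/index.html"),
        ("GoodBot", "https://example.com/index.html"),
        ("NiceBot", "https://example.com/private/page.html"),
        ("PrettyBot", "https://example.com/shinystuff/gold.png"),
        ("PrettyBot", "https://example.com/index.html"),
    ]
    for agent, url in checks:
        print(agent, url, rp.can_fetch(agent, url))
    print("crawl delay for SomeBot:", rp.crawl_delay("SomeBot"))
```

This prints `False` for SomeBot, `True` for GoodBot and NiceBot, `True` for PrettyBot inside /shinystuff/ and `False` elsewhere, and a crawl delay of 5. Fed the file exactly as described (rules without any `User-agent` line), this parser would instead leave every bot unrestricted and report no crawl delay, which is one concrete case of interpretations differing.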
